Independent service · sold by engagement, not subscription

When performance is disputed, evidence matters.

LumeTrax® Audit & Assurance — independent technical reviews, bankability-grade. Pre-COD readiness. Post-COD performance verification. OEM claim review. Lender and investor reporting. Built on operating data — not on inspector assumptions.

Engagement fees, not subscription. We work for the asset, the owner, and the lender — not for the OEM under review.

An independent voice between the OEM, the EPC, the operator, and the lender.

A renewable asset accumulates technical opinions across its life. The EPC has a view. The OEM has a view. The O&M contractor has a view. The asset owner has a view. The lender has a different one. When those views disagree — at COD, at warranty claim, at refinancing, at insurance assessment — somebody has to write the version of events the contract treats as authoritative.

The traditional version of that work runs on site walks, paper records, and a small number of operating-data exports requested from each counterparty. The output is competent but slow, and the data underneath it is whatever each counterparty chose to share.

Audit & Assurance is built differently. Reviews run on the asset's actual operating data — historian, alarm record, work-order trail, downtime classification, performance attribution — accessed through the LumeTrax data model. The deliverable is a technical opinion the lender, the insurer, or the contract counterparty can rely on, with every figure source-linked back to the underlying record.

Four standard scopes. Custom scopes by quotation.

Pre-COD readiness review

- Scope: As-built verification against design, single-line and protection-setting validation, alarm-philosophy review, control-system commissioning evidence, baseline performance under representative conditions, evidence-pack handover.
- Audience: Asset owner, EPC, lender's IE
- Turnaround: 4–6 weeks
- Output: Pre-COD readiness report + COD evidence pack

Post-COD performance verification

- Scope

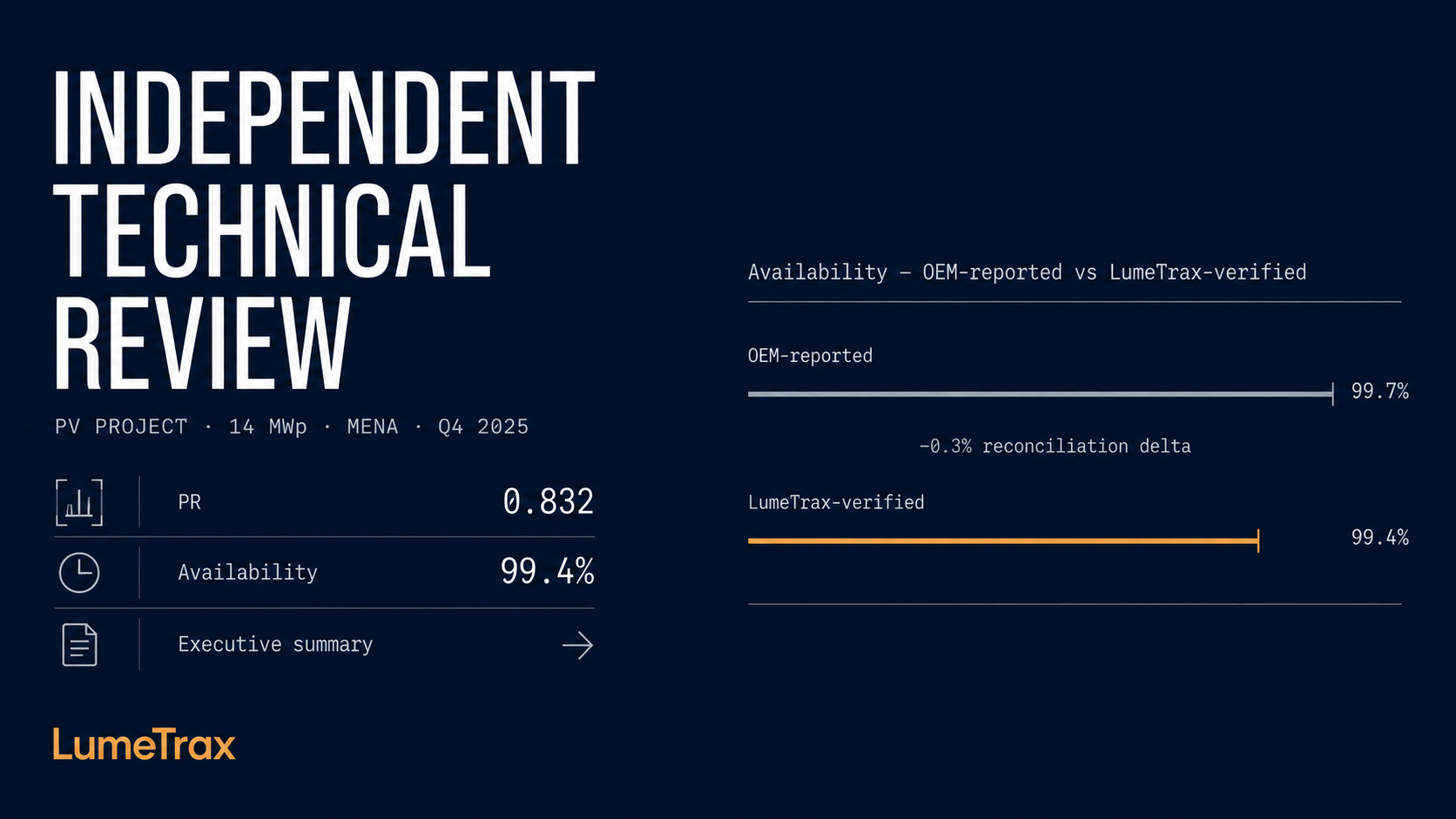

- Performance Ratio, availability, specific yield, capacity factor — verified against weather-normalised expectations and contract definitions. Loss attribution per cause. Reconciliation of OEM-reported figures against operating-data-derived figures.

- Audience

- Asset owner, lender, insurer, asset manager

- Turnaround

- 4–6 weeks

- Output

- Performance verification report + reconciliation table
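To make "reconciliation of OEM-reported figures against operating-data-derived figures" concrete, here is a minimal Python sketch of two calculated metrics and a reconciliation row. It assumes an uncorrected Performance Ratio in the IEC 61724-1 style (final yield divided by reference yield) and a time-based, capacity-weighted availability. Contracts often define both differently (temperature correction, exclusions, energy-based availability), and a review uses the contract's own definitions. The function names, tolerance values, and all inputs are hypothetical and synthetic.

```python
from dataclasses import dataclass

# Hypothetical sketch: uncorrected IEC 61724-1 style PR and time-based,
# capacity-weighted availability. A real review applies the contract's definitions.
G_REF_KW_PER_M2 = 1.0  # reference (STC) irradiance

def performance_ratio(energy_ac_kwh: float, dc_capacity_kwp: float,
                      poa_irradiation_kwh_per_m2: float) -> float:
    """PR = final yield / reference yield."""
    final_yield_h = energy_ac_kwh / dc_capacity_kwp  # kWh/kWp = equivalent full-load hours
    reference_yield_h = poa_irradiation_kwh_per_m2 / G_REF_KW_PER_M2  # hours at reference irradiance
    return final_yield_h / reference_yield_h

def capacity_weighted_availability(units: list[tuple[float, float]], period_h: float) -> float:
    """units: (capacity_kw, available_hours) per inverter or block."""
    available = sum(cap * hours for cap, hours in units)
    possible = sum(cap for cap, _ in units) * period_h
    return available / possible

@dataclass(frozen=True)
class ReconciliationRow:
    metric: str
    oem_reported: float
    data_derived: float
    tolerance: float  # hypothetical absolute band before a delta needs a written explanation

    @property
    def delta(self) -> float:
        return self.data_derived - self.oem_reported

    @property
    def needs_explanation(self) -> bool:
        return abs(self.delta) > self.tolerance

# Synthetic inputs, for illustration only.
pr = performance_ratio(1_600_000, 1_000, 2_000)
availability = capacity_weighted_availability([(500, 720), (500, 648)], period_h=720)
rows = [
    ReconciliationRow("Performance Ratio", oem_reported=0.83, data_derived=pr, tolerance=0.01),
    ReconciliationRow("Availability", oem_reported=0.95, data_derived=availability, tolerance=0.005),
]
for r in rows:
    print(f"{r.metric}: OEM {r.oem_reported:.3f} | data {r.data_derived:.3f} | "
          f"delta {r.delta:+.3f} | explain: {r.needs_explanation}")
```

In this sketch, flagging on an explicit tolerance keeps the rule visible: any delta outside the band gets a written explanation in the reconciliation table instead of being absorbed into a headline figure.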

OEM claim review

- Scope: Forensic review of the claimed event, time-bounded operating-data extraction, alarm and control-action chronology, third-party-attributable cause analysis, damages calculation against contract definitions.
- Audience: Asset owner, owner's counsel, OEM, dispute resolution
- Turnaround: 6–10 weeks (scope-dependent)
- Output: Claim-review memorandum + supporting evidence pack
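The "time-bounded operating-data extraction" and "alarm and control-action chronology" steps can be sketched as a window filter followed by a stable sort. This is a minimal Python illustration, not the LumeTrax implementation: the `OperatingEvent` record, the `chronology` helper, the device IDs, and the event data are hypothetical, and it assumes all timestamps have already been aligned to a single time base (e.g. UTC).

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass(frozen=True)
class OperatingEvent:
    timestamp: datetime  # assumed already aligned to one time base
    source_id: str       # hypothetical historian tag or device ID
    kind: str            # "alarm" or "control_action"
    text: str

def chronology(events, claimed_event_at, before, after):
    """Keep events inside [t - before, t + after], oldest first."""
    start, end = claimed_event_at - before, claimed_event_at + after
    window = [e for e in events if start <= e.timestamp <= end]
    # Sort by timestamp, then source ID, so simultaneous records get a stable order.
    return sorted(window, key=lambda e: (e.timestamp, e.source_id))

# Synthetic events around a hypothetical claimed event at t0.
t0 = datetime(2024, 6, 3, 14, 0, 0)
events = [
    OperatingEvent(t0 + timedelta(seconds=2), "INV-07", "control_action", "Inverter trip command"),
    OperatingEvent(t0 - timedelta(hours=3), "MET-01", "alarm", "Irradiance sensor stale"),
    OperatingEvent(t0, "BRK-02", "alarm", "Breaker trip"),
    OperatingEvent(t0 - timedelta(minutes=5), "INV-07", "alarm", "DC ground-fault warning"),
]
for e in chronology(events, t0, before=timedelta(minutes=30), after=timedelta(minutes=10)):
    offset_s = (e.timestamp - t0).total_seconds()
    print(f"{offset_s:+8.0f}s  {e.source_id:7} {e.kind:15} {e.text}")
```

The sensor alarm three hours earlier falls outside the 30-minute window and is excluded; widening or narrowing that window is a scoping decision that would be recorded in the memorandum.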

Lender / investor reporting

- Scope: Defined cadence (monthly, quarterly, or semi-annual). Covenant-relevant operating metrics, downtime classification, recoverable-vs-permanent loss, OPEX-per-MWp trends, year-over-year comparability.
- Audience: Lender, investor, asset-management committee, board
- Turnaround: Per agreed cadence, with about one week to assemble each pack
- Output: Standardised reporting pack in the same format every period

Why a LumeTrax review reads differently

Every figure in every deliverable is classified by source.

Lender-grade reviews fail when the deliverable blurs the line between what the data says, what the methodology computes from it, what the assumptions were, and what the reviewer judged. Audit & Assurance reports never blur the line.

| Source class | Definition | Example |
|---|---|---|
| Measured | Direct historian / metering / alarm-event record. Auditable to timestamp + source ID. | Energy delivered (MWh), inverter output time-series, breaker trip event |
| Calculated | Derived from measured data via documented methodology. The formula is published. | Performance Ratio (PR), capacity-weighted availability, loss-attribution waterfall |
| Assumed | Required input where measurement isn't available; assumption is named, sourced, and bounded. | OEM-published efficiency curve, design-stage soiling-rate assumption |
| Judged | Reviewer interpretation where data is contested, ambiguous, or insufficient. The reviewer signs the judgement. | Cause attribution where two competing explanations are partially supported |

When a lender's IE asks "is this number measured, calculated, assumed, or judged?", the answer is already on that figure's row in the report. Disagreements between counterparties typically resolve at the assumption-or-judgement layer, where they belong.
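One way to make the source classes enforceable is to attach the class and its provenance to every figure as data, so that a figure without provenance, or a judged figure without a reviewer signature, cannot be created. The Python sketch below is illustrative only; the `Figure` and `SourceClass` names, the validation rules, and the sample values are assumptions, not the LumeTrax data model.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional

class SourceClass(Enum):
    MEASURED = "measured"      # historian / metering / alarm-event record
    CALCULATED = "calculated"  # derived via a published formula
    ASSUMED = "assumed"        # named, sourced, bounded input
    JUDGED = "judged"          # reviewer interpretation, signed

@dataclass(frozen=True)
class Figure:
    name: str
    value: float
    unit: str
    source_class: SourceClass
    provenance: str                  # source ID + timestamp, formula reference, or assumption source
    signed_by: Optional[str] = None  # required for judged figures in this sketch

    def __post_init__(self):
        if not self.provenance:
            raise ValueError(f"{self.name}: no provenance recorded")
        if self.source_class is SourceClass.JUDGED and not self.signed_by:
            raise ValueError(f"{self.name}: judged figures must be signed by a reviewer")

def contestable(figures):
    """Figures at the assumption-or-judgement layer, where disagreements are argued."""
    return [f for f in figures if f.source_class in (SourceClass.ASSUMED, SourceClass.JUDGED)]

# Synthetic figures, for illustration only.
figures = [
    Figure("Energy delivered", 9_450.0, "MWh", SourceClass.MEASURED, "revenue meter M1, period export"),
    Figure("Performance Ratio", 0.80, "-", SourceClass.CALCULATED, "methodology section 2, PR definition"),
    Figure("Soiling rate", 0.02, "fraction/month", SourceClass.ASSUMED, "design-stage assumption, bounded 0.01-0.03"),
    Figure("Share of loss attributed to OEM", 0.6, "-", SourceClass.JUDGED, "reviewer note 7",
           signed_by="Lead reviewer"),
]
print([f.name for f in contestable(figures)])
# → ['Soiling rate', 'Share of loss attributed to OEM']
```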

Independence is structural — and screened

Engagements are scoped to remove structural favourability before acceptance.

Every engagement runs through a conflict-screening checklist before the scope of work is signed.

- The OEM, EPC, or operator under review is named at engagement initiation.

- LumeTrax discloses any commercial relationship with the named counterparties, including indirect ones.

- Where a structural conflict is identified, LumeTrax either declines the engagement or scopes it to remove the conflict.

- The screening outcome is documented in the engagement letter, not in side-correspondence.

- The customer's right to challenge the screening outcome is contractual.

Audit & Assurance does not represent OEMs in performance disputes. We do not accept engagements where our position is structurally favourable to the equipment under review. The screening process is the mechanism that enforces both.

Independent of the OEM under review. Aligned to the contract being measured against.

"Independent" is a word that gets diluted, so we define it narrowly: engagements are accepted only where the reviewer's position is structurally neutral to the equipment, the EPC, and the operating party under review. The conflict-screening process is the mechanism that enforces it. LumeTrax reviewers are senior renewable-energy engineers selected for operational depth and structural independence from OEM, EPC, and IPP commercial relationships.

What independence is not: distance from the asset. The opposite. The reviewer reads the as-built single-line, the historian record, the alarm philosophy, and the work-order trail in detail. Independence comes from how the review is conducted, who pays for it, and where the methodology is documented — not from how little the reviewer knows.

The version a lender opens. Section by section.

An anonymised sample deliverable (real methodology, synthetic numbers, no real customer name) is available on request. It follows the structure below.

| Section | Contents |
|---|---|
| 1. Executive summary | Headline performance against contract, key findings, source-classified |
| 2. Methodology + definitions | KPI definitions, calculation logic, data sources, source-class glossary |
| 3. Asset overview | As-built configuration, capacity register, contract structure (anonymised) |
| 4. Performance metrics | PR, availability, specific yield, capacity factor; measured and calculated, weather-normalised |
| 5. Loss attribution | Waterfall analysis, recoverable-vs-permanent classification, source-linked per bucket |
| 6. Counterparty allocation | OEM warranty / EPC / O&M / weather / grid / curtailment; judged where data is ambiguous |
| 7. Reconciliation | OEM-reported figures vs. operating-data-derived figures, with explanation of any delta |
| 8. Recommendations + risks | Operationally actionable; bounded by what data and methodology actually support |
| 9. Appendices | Source links, methodology references, conflict-screening outcome, reviewer credentials |
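The loss-attribution waterfall in section 5 can be illustrated with a short Python sketch: start from weather-normalised expected energy, subtract each attributed loss bucket, and show whatever remains against measured energy as an explicit unattributed residual rather than spreading it across causes. The `LossBucket` type, the causes, their recoverable/permanent flags, and all numbers are hypothetical; in a real review the classification follows the contract and the evidence.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class LossBucket:
    cause: str
    energy_mwh: float
    recoverable: bool  # hypothetical flag; real classification follows contract and evidence

def waterfall(expected_mwh, buckets, actual_mwh):
    """Return (label, step_mwh, cumulative_mwh) rows from expected energy down to actual."""
    rows = [("Expected (weather-normalised)", expected_mwh, expected_mwh)]
    running = expected_mwh
    for b in buckets:
        running -= b.energy_mwh
        rows.append((b.cause, -b.energy_mwh, running))
    # Whatever the attributed buckets do not explain is shown explicitly.
    residual = running - actual_mwh
    rows.append(("Unattributed residual", -residual, actual_mwh))
    return rows

def split_recoverable(buckets):
    """Total attributed loss split into (recoverable, permanent) MWh."""
    recoverable = sum(b.energy_mwh for b in buckets if b.recoverable)
    permanent = sum(b.energy_mwh for b in buckets if not b.recoverable)
    return recoverable, permanent

# Synthetic period, for illustration only.
buckets = [
    LossBucket("Inverter downtime", 250.0, recoverable=True),
    LossBucket("Soiling", 150.0, recoverable=True),
    LossBucket("Module degradation", 100.0, recoverable=False),
]
for label, step, cumulative in waterfall(10_000.0, buckets, actual_mwh=9_450.0):
    print(f"{label:30} {step:+9.1f} {cumulative:9.1f}")
print(split_recoverable(buckets))
```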

Pricing

Indicative engagement fees.

| Engagement | Starting fee | What's included |
|---|---|---|
| Site Review | from $7,500 / site | Standard scope (pre-COD readiness OR post-COD verification at a single site). Methodology, data extraction, on-site validation visit, deliverable. Typical turnaround 4–6 weeks. |
| Bankability Review | from $18,000 / site | Lender-grade scope. Includes Site Review work plus full reconciliation against contract performance guarantees, lender-format reporting pack, and presentation to lender / investor stakeholders. Typical turnaround 8–10 weeks. |
| Portfolio Reporting | quotation | Recurring lender / investor reporting across multiple plants. Cadence and format agreed at engagement. |
| OEM Claim Review | quotation | Scope dependent on dispute scale, evidence volume, and counterparty timeline. |

Indicative starting fees only. Final pricing depends on plant capacity, data availability, scope, turnaround requirements, and counterparty topology. Travel and on-site validation costs are reimbursed at standard rates. Audit & Assurance is billed as engagement fees, not as a subscription. Engagements run alongside any LumeTrax platform subscription the asset already has, and use the platform's data where available. They can also run on an asset not instrumented with LumeTrax, with a longer lead time for data extraction.

Frequently asked questions

- Lender acceptance is a function of the lender, the contract, and the documentation provided. The deliverable is built to the bar a lender would set — defined methodology, source-classified figures, period-over-period comparability, presentation in a format that fits a credit committee paper. Sample report available on request.

- Yes. Engagements can run on third-party-instrumented or partly-instrumented assets via standard data extraction (historian export, OPC UA, MQTT, OEM APIs). Lead time is longer because the data path is reconstructed for the engagement; the methodology and deliverable format are unchanged.

- Audit & Assurance reports on the asset's performance, not on the platform's. Reviewers cite operating data, not platform behaviour. Where a customer prefers a third-party reviewer for the optics, we'll say so — and recommend one. Independence is a structural property; we treat any conflict honestly rather than papering over it. The conflict-screening process documents the scoping outcome.

- Yes, both orderings work. The engagement is contracted independently from the platform subscription and can run before, alongside, or after any platform decision.

- A named senior reviewer, with their credentials disclosed. The deliverable is reviewable, defensible, and citable.

- A standard Site Review runs 4–6 weeks from kick-off to delivered report. A Bankability Review runs 8–10 weeks. An OEM claim review typically runs 6–10 weeks, depending on scope. Portfolio reporting runs on its agreed cadence. Custom scopes, such as dispute support, are sized at engagement.

- Yes. Every figure carries a source class: measured, calculated, assumed, or judged. This is the methodology property that makes the deliverable defensible to a lender's IE or a dispute-resolution panel.

Audit & Assurance reads from the same data the platform produces
